Modern Data Warehousing: A Complete Guide for 2025

Discover how modern data warehousing has evolved in 2025. Learn about cloud-native architectures, real-time processing, and best practices for building scalable data infrastructure that powers business intelligence.

Data warehousing has changed substantially. In 2025, organizations increasingly combine cloud-native architectures, real-time processing, and AI-driven analytics to build data infrastructure that delivers measurable business value. This guide covers the core components, architectural patterns, modeling choices, and an implementation roadmap for a modern data warehouse.

The Evolution of Data Warehousing in 2025

Traditional data warehouses were built for a different era. Today's data landscape demands:

- Real-time insights instead of batch processing delays

- Flexible schemas that adapt to changing business needs

- Cost-effective scaling that grows with your data volume

- Self-service analytics that empower business users

Traditional vs. Modern Data Warehousing

| Aspect | Traditional Approach | Modern 2025 Approach |
|---|---|---|
| Architecture | On-premises, rigid | Cloud-native, flexible |
| Data Processing | Batch (daily/weekly) | Real-time + batch |
| Schema Design | Schema-on-write | Schema-on-write, plus schema-on-read for semi-structured data |
| Scaling | Vertical (expensive) | Horizontal (cost-effective) |
| User Access | IT-dependent | Self-service enabled |
| Cost Model | Fixed infrastructure | Pay-as-you-use |

Core Components of Modern Data Warehouses

1. Data Ingestion Layer

Purpose: Collect data from multiple sources efficiently

Key Technologies:

- Streaming Platforms: Apache Kafka, Amazon Kinesis

- ETL/ELT Tools: Fivetran, Stitch, Airbyte (often orchestrated with Apache Airflow)

- Real-time Connectors: Database CDC, API integrations

Best Practices:

- Prioritize real-time ingestion for critical business data

- Validate data at the point of ingestion

- Use schema evolution to handle changing data structures
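
As a rough illustration of validating at the point of ingestion while tolerating schema changes, the sketch below checks each record against an expected schema and quarantines failures instead of dropping them. The `orders` fields, `EXPECTED_SCHEMA`, and both function names are hypothetical and not tied to any particular ingestion tool. Unknown extra fields are passed through untouched, which is one simple way to allow additive schema evolution.

```python
from datetime import datetime

# Hypothetical expected schema for an "orders" feed: field name -> required type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "created_at": str}


def validate_record(record: dict) -> list[str]:
    """Return a list of validation errors; an empty list means the record is valid."""
    errors = []
    for field, expected_type in EXPECTED_SCHEMA.items():
        if field not in record or record[field] is None:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(f"wrong type for {field}: {type(record[field]).__name__}")
    # Only check the timestamp format when the field is present as a string.
    if isinstance(record.get("created_at"), str):
        try:
            datetime.fromisoformat(record["created_at"])
        except ValueError:
            errors.append("created_at is not an ISO 8601 timestamp")
    return errors


def split_batch(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Route valid records onward and quarantine the rest with their errors."""
    valid, rejected = [], []
    for record in records:
        errors = validate_record(record)
        if errors:
            rejected.append({"record": record, "errors": errors})
        else:
            valid.append(record)
    return valid, rejected
```

Keeping rejected records alongside their error messages makes it possible to fix and replay them later rather than losing data silently.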

2. Storage Layer

Purpose: Store data efficiently and cost-effectively

Modern Storage Options:

| Storage Type | Best For | Cost | Performance |
|---|---|---|---|
| Hot Storage | Frequently accessed data | High | Very Fast |
| Warm Storage | Occasionally accessed data | Medium | Fast |
| Cold Storage | Archive/compliance data | Low | Slower |
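
One way to apply the tiers in the table is to assign data by how recently it was accessed. The sketch below is a minimal example of that rule; the 30-day and 180-day thresholds and the `choose_tier` function are assumptions for illustration, not provider defaults.

```python
from datetime import date

# Assumed thresholds; tune them to your access patterns and provider pricing.
HOT_MAX_DAYS = 30
WARM_MAX_DAYS = 180


def choose_tier(last_accessed: date, today: date) -> str:
    """Pick a storage tier from the number of days since the last access."""
    age_days = (today - last_accessed).days
    if age_days <= HOT_MAX_DAYS:
        return "hot"
    if age_days <= WARM_MAX_DAYS:
        return "warm"
    return "cold"
```

In practice most cloud providers let you express a rule like this declaratively as a lifecycle policy, so code of this kind is mainly useful for planning and cost estimates.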

Cloud Platforms:

- Amazon Redshift: Mature, SQL-compatible

- Google BigQuery: Serverless, automatic scaling

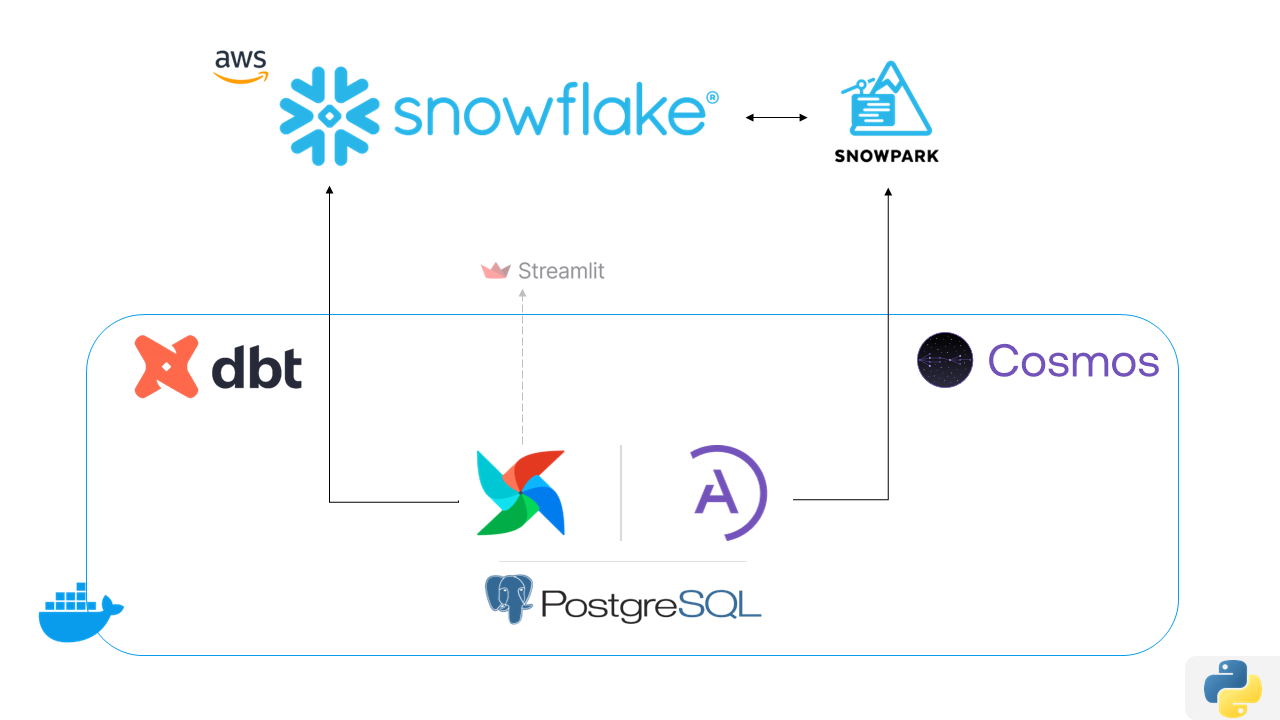

- Snowflake: Multi-cloud, separation of compute/storage

- Azure Synapse: Integrated analytics platform

3. Processing Layer

Purpose: Transform and prepare data for analysis

Processing Types:

- Batch Processing: Large-scale data transformations

- Stream Processing: Real-time data processing

- Micro-batch: Balance between real-time and batch
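
To make the micro-batch idea concrete, the sketch below groups a stream of events into small fixed-size batches. The `micro_batches` function is a hypothetical helper, and it triggers on batch size only; engines such as Spark Structured Streaming typically trigger on a time interval instead.

```python
from typing import Iterable, Iterator


def micro_batches(events: Iterable[dict], max_size: int) -> Iterator[list[dict]]:
    """Group an event stream into lists of at most max_size events."""
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    batch = []
    for event in events:
        batch.append(event)
        if len(batch) >= max_size:
            yield batch
            batch = []
    if batch:  # flush the final partial batch
        yield batch
```

A smaller `max_size` lowers latency at the cost of more frequent writes, which is the trade-off the micro-batch approach is meant to balance.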

Modern Tools:

- dbt (Data Build Tool): SQL-based transformations

- Apache Spark: Distributed processing

- Cloud Functions: Serverless processing

4. Serving Layer

Purpose: Deliver data to end users and applications

Access Methods:

- SQL Interfaces: Direct database queries

- APIs: Programmatic data access

- BI Tools: Tableau, Power BI, Looker

- Data Catalogs: Self-service data discovery

Architectural Patterns for 2025

1. Lakehouse Architecture

Combines the low-cost, flexible storage of a data lake with the query performance and governance of a data warehouse

Benefits:

- Store structured and unstructured data together

- Reduce data movement and duplication

- Support both analytics and AI/ML workloads

Implementation:

Raw Data flows to Data Lake, then to Curated Zones, then to Data Warehouse, and finally to Analytics

2. Multi-Cloud Strategy

Avoid vendor lock-in and optimize for different workloads

Approach:

- Primary Cloud: Main data warehouse platform

- Secondary Cloud: Disaster recovery and specific workloads

- Data Synchronization: Keep critical data synchronized

3. Real-Time Analytics Architecture

Enable immediate insights from streaming data

Components:

- Stream Processing: Process data as it arrives

- In-Memory Storage: Fast access to recent data

- Historical Integration: Combine real-time with historical data
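
The historical integration component can be sketched as a merge at query time: totals already stored in the warehouse are combined with counts from events that have arrived since the last load. The `merged_counts` function and the `product` field are hypothetical, and a plain list stands in for the in-memory store.

```python
from collections import Counter


def merged_counts(historical: dict[str, int], recent_events: list[dict]) -> dict[str, int]:
    """Add per-product counts from recent events to historical totals."""
    totals = Counter(historical)  # copy, so the input is not mutated
    totals.update(event["product"] for event in recent_events)
    return dict(totals)
```

This only works cleanly if the two sources do not overlap, so the boundary between "historical" and "recent" (for example, the last load timestamp) needs to be tracked explicitly.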

Data Modeling for Modern Warehouses

Dimensional Modeling Evolution

Traditional Star Schema:

- Fact tables with dimension tables

- Optimized for specific queries

- Rigid structure

Modern Approach:

- Data Vault 2.0: Agile, scalable modeling

- Anchor Modeling: Handle temporal data effectively

- Hybrid Models: Combine approaches based on use case

Schema Design Strategies

| Strategy | When to Use | Pros | Cons |
|---|---|---|---|
| Star Schema | Well-defined requirements | Fast queries, familiar | Rigid, hard to change |
| Snowflake Schema | Complex hierarchies | Normalized, space efficient | Complex joins |
| Data Vault | Agile environments | Flexible, auditable | Learning curve |
| One Big Table | Simple analytics | Easy to understand | Can be inefficient |
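
For readers new to the star schema row above, here is a minimal sketch using Python's built-in `sqlite3` module: one fact table joined to one dimension table and aggregated by a dimension attribute. The table names, columns, and sample rows are invented for illustration, and SQLite stands in for a real warehouse engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_product (
    product_id INTEGER PRIMARY KEY,
    category   TEXT
);
CREATE TABLE fact_sales (
    sale_id    INTEGER PRIMARY KEY,
    product_id INTEGER REFERENCES dim_product(product_id),
    amount     REAL
);
INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
INSERT INTO fact_sales VALUES (1, 1, 12.0), (2, 1, 8.0), (3, 2, 30.0);
""")

# Typical star-schema query: join the fact table to a dimension, then aggregate.
rows = conn.execute("""
SELECT d.category, SUM(f.amount) AS revenue
FROM fact_sales AS f
JOIN dim_product AS d ON d.product_id = f.product_id
GROUP BY d.category
ORDER BY d.category
""").fetchall()

print(rows)  # [('books', 20.0), ('games', 30.0)]
```

A "One Big Table" design would instead store `category` directly on each sales row, trading the join for repeated values.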

Implementation Roadmap

Phase 1: Foundation (Months 1-3)

Objectives:

- Assess current data landscape

- Choose cloud platform and tools

- Set up basic infrastructure

Key Activities:

- Data Inventory: Catalog all data sources

- Platform Selection: Choose based on requirements and budget

- Pilot Project: Start with one critical data source

- Team Training: Build internal capabilities

Success Metrics:

- First data pipeline operational

- Team trained on new platform

- Basic reporting available

Phase 2: Core Implementation (Months 4-8)

Objectives:

- Migrate critical data sources

- Implement data governance

- Enable self-service analytics

Key Activities:

- Data Migration: Move priority datasets

- Data Quality: Implement monitoring and validation

- Security Setup: Access controls and encryption

- User Training: Enable business users

Success Metrics:

- 80% of critical data migrated

- Data quality dashboard operational

- Business users creating reports

Phase 3: Advanced Features (Months 9-12)

Objectives:

- Add real-time capabilities

- Implement AI/ML integration

- Optimize performance and costs

Key Activities:

- Real-time Streams: Add streaming data sources

- ML Integration: Connect to AI/ML platforms

- Performance Tuning: Optimize queries and costs

- Advanced Analytics: Enable predictive analytics

Success Metrics:

- Real-time dashboards available

- ML models using warehouse data

- 30% cost reduction achieved

Best Practices for Success

Data Quality Management

Implement comprehensive data quality processes:

- Data Profiling: Understand your data characteristics

- Validation Rules: Automated quality checks

- Monitoring: Continuous data quality monitoring

- Alerting: Immediate notification of quality issues

Quality Metrics to Track:

- Completeness: Percentage of non-null values

- Accuracy: Correctness of data values

- Consistency: Data consistency across sources

- Timeliness: Data freshness and availability
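
Two of these metrics, completeness and timeliness, are straightforward to compute. The sketch below is one possible formulation; the function names and the idea of a maximum allowed lag are assumptions, and accuracy and consistency usually need domain-specific rules that are not shown here.

```python
from datetime import datetime, timedelta


def completeness(rows: list[dict], column: str) -> float:
    """Percentage of rows where the column is present and not None."""
    if not rows:
        return 0.0
    non_null = sum(1 for row in rows if row.get(column) is not None)
    return 100.0 * non_null / len(rows)


def is_fresh(last_loaded_at: datetime, now: datetime, max_lag: timedelta) -> bool:
    """True if the most recent load falls within the allowed lag."""
    return now - last_loaded_at <= max_lag
```

Tracking these values per table over time, rather than as one-off checks, is what makes alerting on quality regressions possible.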

Performance Optimization

Query Performance:

- Use the physical design features your platform supports, such as clustering, sort keys, or indexes

- Implement query result caching

- Optimize table partitioning

- Monitor and tune slow queries

Cost Optimization:

- Implement data lifecycle policies

- Use appropriate storage tiers

- Monitor and optimize compute usage

- Set up cost alerts and budgets

Security and Compliance

Security Measures:

- Encryption: At rest and in transit

- Access Controls: Role-based permissions

- Auditing: Comprehensive access logging

- Network Security: VPCs and firewalls

Compliance Considerations:

- GDPR: Data privacy and right to deletion

- SOX: Financial data controls

- HIPAA: Healthcare data protection

- Industry Standards: Sector-specific requirements

Common Challenges and Solutions

Challenge 1: Data Silos

Problem: Data scattered across multiple systems

Solution:

- Implement data integration platform

- Create unified data catalog

- Establish data governance policies

Challenge 2: Slow Query Performance

Problem: Queries taking too long to execute

Solution:

- Analyze query patterns and optimize

- Apply clustering, sort keys, or indexes where your platform supports them

- Use materialized views for common queries

- Consider query result caching

Challenge 3: Rising Costs

Problem: Cloud data warehouse costs growing unexpectedly

Solution:

- Implement cost monitoring and alerting

- Use auto-scaling and auto-suspend features

- Optimize storage with lifecycle policies

- Regular cost review and optimization
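
A basic form of cost alerting is to project month-end spend from spend so far and compare it with the budget. The sketch below assumes spend accrues roughly evenly through the month, which is a simplification; both function names and the figures in the usage note are hypothetical.

```python
def projected_month_end_spend(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Linear projection of month-end spend from the daily average so far."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be between 1 and days_in_month")
    return spend_to_date / day_of_month * days_in_month


def should_alert(spend_to_date: float, day_of_month: int, days_in_month: int, budget: float) -> bool:
    """True when the projected month-end spend exceeds the budget."""
    return projected_month_end_spend(spend_to_date, day_of_month, days_in_month) > budget
```

For example, 600 spent by day 10 of a 30-day month projects to 1,800, which would trigger an alert against a 1,500 budget. Most cloud platforms also offer native budget alerts, which are usually the better first step.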

Technology Selection Guide

Choosing the Right Platform

For Small to Medium Businesses:

- Google BigQuery: Serverless, easy to start

- Amazon Redshift Serverless: Managed scaling

- Snowflake: User-friendly, good support

For Large Enterprises:

- Snowflake: Multi-cloud, enterprise features

- Amazon Redshift: AWS ecosystem integration

- Azure Synapse: Microsoft ecosystem integration

For Real-Time Requirements:

- Google BigQuery: Streaming ingestion (Storage Write API)

- Amazon Redshift: Real-time ingestion

- Databricks: Unified analytics platform

Tool Ecosystem Integration

Essential Tools for Modern Data Warehousing:

| Category | Tool Options | Purpose |
|---|---|---|
| Data Integration | Fivetran, Stitch, Airbyte | Connect data sources |
| Transformation | dbt, Dataform, Matillion | Transform and model data |
| Orchestration | Apache Airflow, Prefect | Workflow management |
| Monitoring | Monte Carlo, Great Expectations | Data quality monitoring |
| Visualization | Tableau, Power BI, Looker | Business intelligence |

Future Trends and Preparation

Emerging Technologies

Prepare for the next wave of innovation:

AI-Powered Data Management

- Automated data cataloging

- Intelligent query optimization

- Predictive data quality monitoring

Edge Computing Integration

- Process data closer to sources

- Reduce latency for real-time applications

- Hybrid cloud-edge architectures

Quantum-Ready Security

- Post-quantum encryption methods

- Advanced security protocols

- Future-proof data protection

Skills Development

Build capabilities for the future:

- Technical Skills: Cloud platforms, modern SQL, Python

- Data Governance: Privacy, compliance, ethics

- Business Acumen: Understanding data's business impact

- Soft Skills: Communication, change management

Measuring Success

Key Performance Indicators

Technical Metrics:

- Query Performance: Average query response time

- Data Freshness: Time from source to availability

- System Uptime: Availability percentage

- Cost Efficiency: Cost per query or per GB processed

Business Metrics:

- Time to Insight: From question to answer

- User Adoption: Number of active users

- Decision Speed: Time from insight to business decision

- Revenue Impact: Revenue growth attributable to data-driven initiatives

ROI Calculation

Cost Factors:

- Platform and tool licensing

- Infrastructure and compute costs

- Personnel and training costs

- Migration and setup costs

Benefit Factors:

- Improved decision-making speed

- Reduced manual reporting effort

- Better customer insights

- Operational efficiency gains

ROI Formula:

ROI = (Benefits - Costs) / Costs × 100%
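
As a worked example of the formula, the sketch below computes ROI as a percentage. The `roi_percent` function and the figures are hypothetical and only illustrate the arithmetic.

```python
def roi_percent(benefits: float, costs: float) -> float:
    """ROI as a percentage: (benefits - costs) / costs * 100."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100


# Hypothetical figures: 500,000 in annual benefits against 400,000 in total costs.
print(roi_percent(500_000, 400_000))  # 25.0
```

Benefits and costs should cover the same time period, and benefits such as faster decision-making usually have to be estimated before they can be entered here.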

Conclusion

Modern data warehousing in 2025 is about building flexible, scalable, and intelligent data infrastructure that adapts to your business needs. Success comes from choosing the right technologies, implementing best practices, and continuously optimizing your approach.

The organizations that will thrive are those that view their data warehouse not just as a storage system, but as a strategic asset that enables data-driven decision-making across the entire organization.

Start with a clear strategy, implement incrementally, and always keep the end-user experience in mind. Your modern data warehouse should empower everyone in your organization to make better decisions with data.

UpthriveAI Team

Our expert team at UpthriveAI specializes in data warehousing, AI/ML solutions, and cloud technologies. We help organizations transform their data into actionable insights and competitive advantages.
